The Slide That Could Save Everyone — If the Model Works for Everyone

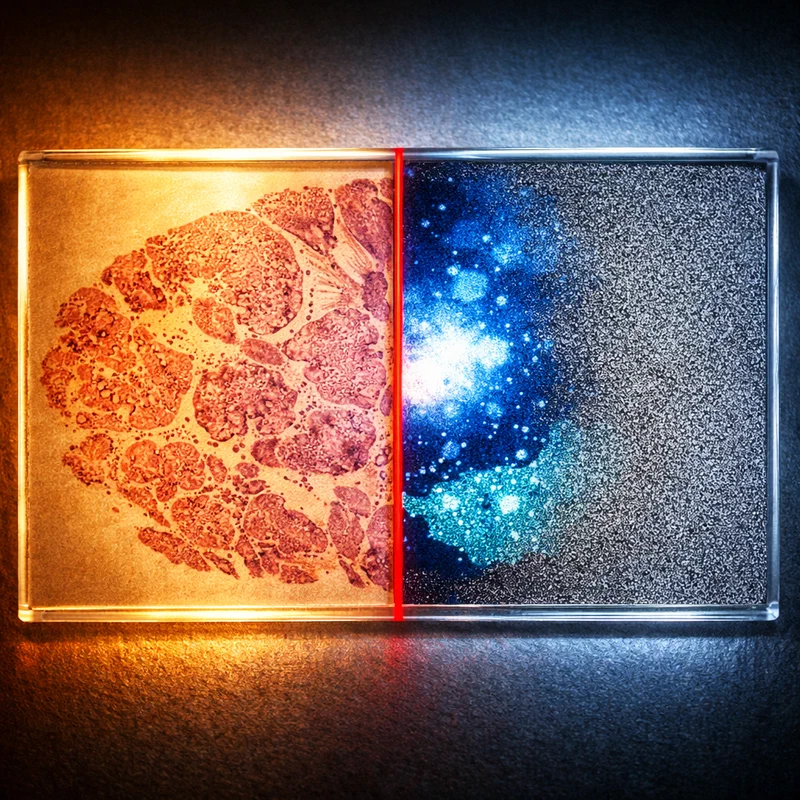

The H&E slide is pathology’s great equalizer. One glass slide, a fraction of a cent in stain, a trained technician: that is enough for every hospital on the planet to see cancer. AACR 2026, which wrapped on April 22 in San Diego, made a spectacular claim on top of that equalizer: with AI, that same penny-stain slide can now tell you everything a $5,000 genomic panel tells you. The democratization pitch is irresistible. Nobody selling it is talking about the flaw.

What the Conference Declared

The American Association for Cancer Research’s 2026 Annual Meeting ran April 17 through 22, drawing nearly 9,600 accepted abstracts — and roughly 12 percent of them were AI-related. That number alone tells you something has changed structurally. Three years ago, the typical AI abstract at AACR was a single-center proof-of-concept: 200 H&E slides, a convolutional network, a modest AUC. At AACR 2026, according to an analysis by Miraei AI of the full abstract corpus, the field has shifted from isolated experiments to “embedded operational systems.” Approximately 225 abstracts used language suggesting real-world clinical validation or deployment.

The plenary session dedicated to AI in cancer research was among the conference’s most watched. Faisal Mahmood of Harvard Medical School presented TITAN, a platform analyzing histopathology slides to generate pathology reports, and Apollo, a whole-patient foundation model trained on millions of patient records across multiple hospital systems. The ambition: represent patients virtually across their entire treatment timeline, making the full complexity of a patient’s history searchable and predictive.

The standout clinical result came from MD Anderson. Their Path-IO model, presented by Rukhmini Bandyopadhyay, predicts immunotherapy response in metastatic non-small cell lung cancer from routine pathology slides — using tissue samples that are already routinely gathered, without any additional testing. In historical validation cohorts, Path-IO separated patients into high-risk and low-risk groups, with high-risk patients facing double the risk of death or disease progression. Across external validation datasets spanning more than 1,000 patients at multiple institutions and countries, Path-IO significantly outperformed PD-L1 testing — the current standard-of-care biomarker for immunotherapy eligibility.

That last point deserves emphasis. In some of the validation groups, the current standard, PD-L1 expression, was no more predictive than a coin flip. Path-IO was meaningfully better, and it ran on a slide that was already sitting in the pathology lab.

Bioptimus, a Paris-based AI startup, presented H-optimus-1 as a late-breaking abstract at the same meeting: a 1.1-billion-parameter Vision Transformer trained on more than one million whole-slide images from over 800,000 patients across more than 4,000 clinical centers. The model predicts cancer biomarkers, gene mutation status, spatial gene expression, and survival outcomes, all from H&E alone. The stated vision is a world where precision pathology no longer requires specialized staining protocols or genomic sequencing infrastructure.

The democratization pitch writes itself. Lung cancer kills 1.8 million people annually, and most of those deaths occur in low- and middle-income countries, where next-generation sequencing is not routinely available. If an AI model can extract EGFR mutation probability, HER2 status, and immunotherapy response prediction from the H&E slide already being processed in a district hospital in Lagos or Chennai, that is precision oncology for the two billion people who currently have no access to it.

The Study That Wasn’t on the Conference Floor

But a paper published in Cell Reports Medicine a few months before the meeting was not prominently discussed at AACR, and it matters enormously for this story.

Researchers at Harvard Medical School and Brigham and Women’s Hospital, led by associate professor of biomedical informatics Kun-Hsing Yu, evaluated four commonly used deep-learning pathology models across 20 cancer types using a large multi-institutional dataset. They found consistent performance gaps linked to patient demographics. Diagnostic accuracy was lower for specific groups defined by race, gender, and age in roughly 29 percent of the diagnostic tasks analyzed. The models struggled to distinguish lung cancer subtypes in African American patients. They showed reduced accuracy classifying breast cancer subtypes in younger patients. Detection performance dropped for renal, thyroid, and stomach cancers across specific demographic groups.

The finding that stopped me was this: the bias wasn’t simply a data-imbalance problem. The AI models could actively extract demographic information (race, age, sex) from pathology slides, something human pathologists cannot do. “Reading demographics from a pathology slide is thought of as a ‘mission impossible’ for a human pathologist,” Yu said. The models were learning demographic shortcuts and using them, which introduced disparities into their diagnostic reasoning.

More data won’t straightforwardly solve this. That is the part that isn’t being said loudly enough at conferences like AACR.
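One common way to test a claim like “the model can read demographics” is a probe: freeze the model, collect its slide-level embeddings, and check whether a very simple classifier trained on those embeddings can recover the demographic label on held-out slides. The sketch below illustrates the idea with a nearest-centroid probe (a simple linear classifier) on toy two-dimensional vectors. It is a hypothetical, standard-library-only illustration: the function names and data are invented, and it is not the analysis performed in Yu’s study.

```python
import random
from typing import Dict, List, Sequence, Tuple

Vector = Sequence[float]
Example = Tuple[Vector, str]  # (embedding, demographic label)


def centroid(vectors: List[Vector]) -> List[float]:
    """Element-wise mean of a non-empty list of equal-length vectors."""
    n = len(vectors)
    return [sum(column) / n for column in zip(*vectors)]


def sq_dist(a: Vector, b: Vector) -> float:
    """Squared Euclidean distance between two vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def probe_accuracy(train: List[Example], test: List[Example]) -> float:
    """Fit one centroid per label on `train`, then return the fraction of
    `test` examples whose nearest centroid matches their true label.
    Accuracy far above chance means the label is decodable from embeddings."""
    grouped: Dict[str, List[Vector]] = {}
    for vec, label in train:
        grouped.setdefault(label, []).append(vec)
    centroids = {label: centroid(vecs) for label, vecs in grouped.items()}
    correct = 0
    for vec, label in test:
        predicted = min(centroids, key=lambda g: sq_dist(vec, centroids[g]))
        correct += int(predicted == label)
    return correct / len(test)


def toy_embeddings(n: int, offset: float, seed: int = 0) -> List[Example]:
    """Hypothetical 2-D 'embeddings' (not real pathology data): group A is
    shifted by +offset on feature 0, group B by -offset; feature 1 is noise."""
    rng = random.Random(seed)
    data: List[Example] = []
    for i in range(n):
        label = "A" if i % 2 == 0 else "B"
        shift = offset if label == "A" else -offset
        data.append(([shift + rng.uniform(-0.5, 0.5), rng.uniform(-0.5, 0.5)], label))
    return data


leaky = toy_embeddings(200, offset=2.0)
print(probe_accuracy(leaky[:100], leaky[100:]))  # 1.0: label fully decodable

neutral = toy_embeddings(200, offset=0.0)
print(probe_accuracy(neutral[:100], neutral[100:]))
```

With `offset=0.0` the two groups are indistinguishable, so the second accuracy should hover around chance. The unsettling part of the finding is that real slide embeddings behave more like the first case, even though no human can see the signal.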

Why Biology Isn’t Bias-Neutral

The reason AI can read demographics from tissue is that cancer biology genuinely differs across populations — and the differences are real, not artifacts of healthcare access disparities alone.

EGFR mutations are present in 10 to 15 percent of NSCLC patients in Western populations; in East Asian patients, the figure is 40 to 50 percent. The morphological fingerprint of EGFR-mutant adenocarcinoma in lung tissue is distinct from that of EGFR-wild-type adenocarcinoma. A model trained primarily on Western patient data learns the EGFR-low tissue fingerprint as its baseline. Apply it in a district hospital in Vietnam or Indonesia, where EGFR mutations are far more common, and the model is working from the wrong biological prior.
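To make “wrong biological prior” concrete: if a model outputs a calibrated probability, that number implicitly bakes in the mutation prevalence of its training set. The standard Bayes-rule correction for a pure prevalence (label) shift is sketched below. The function name is hypothetical, and the 12 and 45 percent figures are simply picked from the ranges quoted above for illustration.

```python
def adjust_for_prevalence(p: float, train_prev: float, target_prev: float) -> float:
    """Bayes-rule correction for a pure prevalence (label) shift.

    p           -- calibrated probability of the positive class from the model
    train_prev  -- positive-class prevalence in the training data
    target_prev -- positive-class prevalence in the deployment population

    Assumption: only the class balance changes; tissue from mutant and
    wild-type tumors is assumed to look the same in both populations.
    """
    if not (0 < train_prev < 1 and 0 < target_prev < 1):
        raise ValueError("prevalences must lie strictly between 0 and 1")
    pos = (target_prev / train_prev) * p                    # re-weighted positive evidence
    neg = ((1 - target_prev) / (1 - train_prev)) * (1 - p)  # re-weighted negative evidence
    return pos / (pos + neg)


# Illustrative numbers: a slide scored 0.50 by a model trained where ~12% of
# cases were EGFR-mutant, re-read for a population where ~45% are.
print(round(adjust_for_prevalence(0.50, 0.12, 0.45), 3))  # 0.857
```

The same 0.50 score corresponds to roughly 86 percent in the target population, so a decision threshold tuned on the training population would under-call mutations there. And this is the easy case: the correction requires knowing the local prevalence and assumes the tissue itself looks the same across populations, which is exactly what Yu’s findings call into question.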

Triple-negative breast cancer, the most aggressive subtype, is approximately twice as prevalent in Black women as in white women. The stromal tumor-infiltrating lymphocyte density — a key variable in several AI biomarker models, including the Lunit studies presented at AACR — differs by subtype. A model that learned the spatial immune architecture of hormone-receptor-positive breast cancer in predominantly white patients will not generalize cleanly to triple-negative disease in populations with higher TNBC burden.

This is not a problem of flawed model architecture. It is a problem of training distribution mismatch. The model is right about what it learned. It learned the wrong population.

What H-optimus-1’s 4,000 Centers Actually Represent

Bioptimus’s claim of training on data from 4,000+ clinical centers sounds like coverage. But the critical question — where are those centers geographically — was not prominently addressed in the abstract or the press coverage that followed.

The history of large-scale medical AI training datasets is not encouraging on this point. The Cancer Genome Atlas (TCGA), which underpins much of computational pathology research, has been criticized for over-representing patients from US academic medical centers. Analysis of TCGA demographics has shown that non-Hispanic white patients constitute approximately 65 percent of the dataset. One study estimated that if TCGA were a country, it would be demographically closest to the United States in the late 1990s.

The Cancer Imaging Archive, another major source for radiology and pathology AI training, skews similarly. Neither is a meaningful representation of the cancer patient populations in sub-Saharan Africa, Southeast Asia, or South Asia — regions where the cancer burden is rising fastest and the diagnostic infrastructure is thinnest.

When Bioptimus presents a model as a platform for global pathology democratization, the onus is on them to demonstrate performance in the populations that phrase implies. “Validated across multiple datasets” and “validated in diverse patient populations that represent global cancer burden” are two different claims.

What Responsible Deployment Requires

I am not arguing that computational pathology AI is worthless or that the AACR 2026 results are fabricated. Path-IO’s performance in over 1,000 patients at multiple institutions is a genuine achievement. H-optimus-1’s training scale puts it among the largest efforts in the field’s history. The trajectory is correct.

But trajectory is not equivalence. There is a specific set of requirements that must be met before a technology is deployed in low-resource settings as a democratizing intervention:

Demographic-stratified performance reporting should be mandatory, not optional, and it should be paired with mitigation. The FAIR-Path framework developed by Yu’s team reduced diagnostic disparities by approximately 88 percent without requiring perfectly balanced training datasets; that kind of intervention costs implementation effort, not data-collection budgets. Both should be standard in every model validation submission.
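Stratified reporting is cheap to compute, which strengthens the case for making it mandatory. A minimal sketch, assuming a binary diagnostic task with one model score, one true label, and one demographic group per patient (all hypothetical inputs): compute the AUC within each group and report the gap between the best- and worst-served groups. This shows only the reporting step, not the FAIR-Path method itself, and the group names and scores are invented.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

Record = Tuple[str, int, float]  # (demographic group, true label 0/1, model score)


def auc(pos_scores: List[float], neg_scores: List[float]) -> float:
    """Probability that a random positive outscores a random negative
    (ties count half). 1.0 is perfect ranking; 0.5 is a coin flip."""
    wins = 0.0
    for sp in pos_scores:
        for sn in neg_scores:
            if sp > sn:
                wins += 1.0
            elif sp == sn:
                wins += 0.5
    return wins / (len(pos_scores) * len(neg_scores))


def stratified_auc(records: List[Record]) -> Dict[str, float]:
    """AUC computed separately for each group. Groups without both positive
    and negative cases are omitted because AUC is undefined for them."""
    by_group: Dict[str, Tuple[List[float], List[float]]] = defaultdict(lambda: ([], []))
    for group, label, score in records:
        positives, negatives = by_group[group]
        (positives if label == 1 else negatives).append(score)
    return {g: auc(p, n) for g, (p, n) in sorted(by_group.items()) if p and n}


# Toy records for two hypothetical groups.
records = [
    ("group_a", 1, 0.9), ("group_a", 1, 0.8), ("group_a", 0, 0.2), ("group_a", 0, 0.3),
    ("group_b", 1, 0.6), ("group_b", 1, 0.4), ("group_b", 0, 0.5), ("group_b", 0, 0.5),
]
report = stratified_auc(records)
print(report)                                       # {'group_a': 1.0, 'group_b': 0.5}
print(max(report.values()) - min(report.values()))  # 0.5
```

Pooled over all eight records, the same scores give an AUC of 0.875, a respectable-looking number that hides a model ranking one group perfectly and the other no better than chance. A real report would also attach confidence intervals, since small subgroups produce noisy estimates.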

Prospective validation in target deployment populations must precede deployment. If H-optimus-1 is going to be used in a clinical pathway in Indonesia, it needs to be tested prospectively in Indonesian patients, not just validated on held-out US or European data.

The geographic composition of training datasets should be disclosed. Not at the level of “4,000+ clinical centers” but at the level of geographic distribution, scanner type diversity, and demographic composition. This is the analog of Phase III trial population disclosure.
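What such a disclosure might look like in practice: a hypothetical sketch that turns per-slide metadata into the composition tables the paragraph calls for. The field names, values, and function are invented for illustration; a real disclosure would need agreed vocabularies for regions, scanner models, and demographic categories.

```python
from collections import Counter
from typing import Dict, List, Mapping


def composition(records: List[Mapping[str, str]], field: str) -> Dict[str, float]:
    """Share of slides for each value of `field` (e.g. region, scanner,
    self-reported race), rounded to three decimals. Records that lack the
    field are counted as 'unreported' rather than silently dropped."""
    counts = Counter(r.get(field, "unreported") for r in records)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {value: round(n / total, 3) for value, n in counts.most_common()}


# Invented per-slide metadata; field names and values are placeholders.
slides = [
    {"region": "North America", "scanner": "vendor_x"},
    {"region": "North America", "scanner": "vendor_x"},
    {"region": "Europe", "scanner": "vendor_y"},
    {"region": "East Asia"},
]
for field in ("region", "scanner"):
    print(field, composition(slides, field))
# region {'North America': 0.5, 'Europe': 0.25, 'East Asia': 0.25}
# scanner {'vendor_x': 0.5, 'vendor_y': 0.25, 'unreported': 0.25}
```

Note what the output cannot show: a region with no slides simply never appears. A composition table therefore has to be read against the list of populations a model is marketed to, not on its own.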

Lunit, whose six AACR presentations included collaborations with Agilent Technologies and Ajou University Medical Center in South Korea, offers more geographic diversity than most Western-origin competitors. Its MOUNTAINEER trial analysis, which found that AI-quantified HER2 expression predicted tucatinib response with an objective response rate (ORR) as high as 80 percent in patients with high HER2 expression, is one of the more clinically grounded AI pathology results in recent memory. But Lunit’s coverage skews toward lung and colorectal cancer in East Asian cohorts. Sub-Saharan Africa remains essentially absent from the entire AACR AI landscape.

The Test That Matters

The H&E slide doesn’t discriminate. It stains every cancer the same way. The AI reading it might — and the places where it will fail most are precisely the places where it was never trained.

The AACR 2026 AI pathology revolution is real. The democratization promise it carries is conditional. The condition — performance equity across the populations who most need this technology — was mostly invisible on the conference floor last week, despite being the single most important variable for global clinical impact.

When the field next assembles to celebrate another 12 percent AI-abstract share, I want to see a dedicated session on geographic validation gaps, not as an equity addendum, but as core science. Because a diagnostic model that fails for the majority of the world’s cancer patients isn’t a precision tool. It’s a precision exclusion.

References

- AACR Meeting News (April 22, 2026). “Third Plenary Session Examined the AI Revolution Across Cancer Labs and Clinics.” https://www.aacrmeetingnews.org/third-plenary-session-examined-the-ai-revolution-across-cancer-labs-and-clinics/ (Accessed April 28, 2026)
- Miraei AI (April 2026). “AI in Oncology — AACR 2026 Intelligence Brief.” https://aacr2026-ai-insights.miraei.ai/ (Accessed April 28, 2026)
- ecancer (April 2026). “AACR 2026: New platform uses machine learning to predict responses in patients with lung cancer (Path-IO, MD Anderson).” https://ecancer.org/en/news/28091-aacr-2026-new-platform-uses-machine-learning-to-predict-responses-in-patients-with-lung-cancer (Accessed April 28, 2026)
- Medical Xpress (April 2026). “AACR: New platform uses machine learning to predict responses in patients with lung cancer.” https://medicalxpress.com/news/2026-04-aacr-platform-machine-responses-patients.html (Accessed April 28, 2026)
- Lunit (April 17, 2026). “Lunit to Present Six AI Studies at AACR 2026 Highlighting Advances in Precision Oncology and Real-World Clinical Application.” https://www.lunit.io/en/media-hub/lunit-to-present-six-ai-studies-at-aacr-2026-highlighting-advances-in-precision-oncology-and-real-world-clinical-application/ (Accessed April 28, 2026)
- Seoul Economic Daily (April 21, 2026). “LG’s Agentic AI Cuts Cancer Treatment Design from 4 Weeks to 1 Day.” https://en.sedaily.com/finance/2026/04/21/lgs-agentic-ai-cuts-cancer-treatment-design-from-4-weeks-to (Accessed April 28, 2026)
- Dark Daily (January 7, 2026). “AI Cancer Diagnostics Struggle with Equity, Study Finds.” https://www.darkdaily.com/2026/01/07/ai-cancer-diagnostics-struggle-with-equity-study-finds/ (Accessed April 28, 2026)
- Scalbert et al. (April 2026). “Abstract LB174: H-optimus-1: A Foundation Model for Computational Histopathology.” AACR Annual Meeting 2026. https://aacrjournals.org/cancerres/article/86/8_Supplement/LB174/783174/Abstract-LB174-H-optimus-1-A-foundation-model-for (Accessed April 28, 2026)
- Nature Medicine / HEX model (2025). “AI-enabled virtual spatial proteomics from histopathology.” https://www.nature.com/articles/s41591-025-04060-4 (Accessed April 28, 2026)

AI-Generated Content Notice

This article was created using artificial intelligence technology. While we strive for accuracy and provide valuable insights, readers should independently verify information and use their own judgment when making business decisions. The content may not reflect real-time market conditions or personal circumstances.

Whenever possible, we include references and sources to support the information presented. Readers are encouraged to consult these sources for further information.
