The AI Agent Flood in Healthcare: HIMSS 2026 and the Validation We're Skipping

Something extraordinary happened in Las Vegas this week, and I don’t mean the poker tables. HIMSS 2026 — one of health IT’s largest annual gatherings — became a showcase for an unprecedented wave of AI agents, each promising to transform how medicine is practiced, documented, and billed.

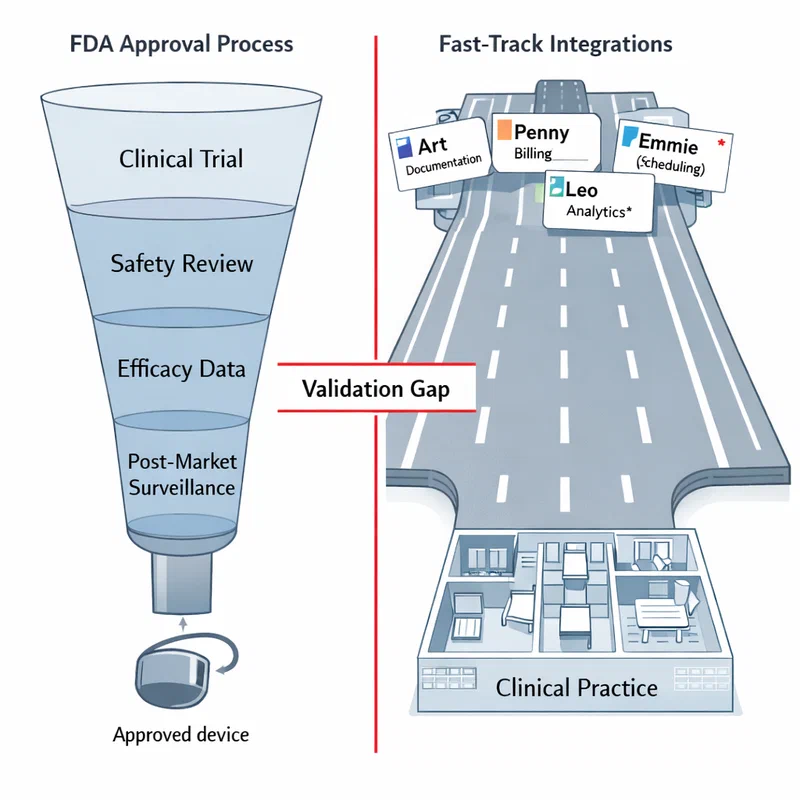

Epic Systems, the nation’s dominant electronic health records vendor, arrived with three named agents: “Art,” who takes clinical notes and drafts documentation; “Penny,” who manages hospital billing and fights coverage denials; and “Emmie,” who answers patient questions and books appointments. Oracle rolled out its own agent designed to assist physicians across 30 medical specialties, suggesting next steps and drafting notes in real time. Amazon announced that its “Health AI” agent — built in partnership with One Medical clinicians — is now available free to any U.S. resident, with Prime members eligible for up to five free consultations with a licensed clinician directly within the AI experience.

Google and Microsoft added their own offerings to the mix. By the end of the conference floor’s first day, it was genuinely difficult to count how many new AI agents had been introduced into clinical settings. As STAT News reported on March 11, AI agents are “proliferating in health care faster than they can be counted.”

The Amazon Benchmark: Clinician Partnership Done Right

Before I raise the alarm, let me acknowledge what Amazon One Medical has done differently. In an interview at HIMSS, Dr. Andrew Diamond, their chief medical officer, described how Health AI was developed in “deep partnership with a real clinical workforce” — primary care physicians working alongside engineers and operational leaders. The agent does not simply redirect patients to search results; when it identifies a need for clinical care, it connects users directly with One Medical clinicians who can diagnose and prescribe within the same session.

That is a meaningfully different design philosophy from building an AI system and then asking doctors to use it. It should be the standard. It largely is not.

When Speed Outpaces Safety: The Billing Crisis

The same week as HIMSS, Blue Cross Blue Shield released a sobering analysis. Researchers from its data analytics arm, Blue Health Intelligence, examined claims data from tens of thousands of maternity admissions. They found a striking pattern: at hospitals using AI-assisted clinical documentation, diagnoses for acute posthemorrhagic anemia — a serious condition requiring blood transfusion — had risen sharply. Yet the rate of actual transfusions had barely moved.

In plain terms: AI-assisted documentation was coding more patients as having a dangerous condition, yet those additional patients were not receiving the treatment the condition calls for. Either the condition wasn’t present or it wasn’t serious enough to treat. Either way, the coding generated significantly higher billing.
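The comparison behind that finding can be sketched in a few lines. This is only an illustration of the idea, not BCBS's method: the `PeriodCounts` structure, the `coding_treatment_gap` function, and every count below are made up for the example.

```python
from dataclasses import dataclass


@dataclass
class PeriodCounts:
    """Hypothetical admission counts for one hospital in one period."""
    admissions: int
    anemia_coded: int  # admissions coded with acute posthemorrhagic anemia
    transfused: int    # admissions that actually received a transfusion


def rate(numerator: int, denominator: int) -> float:
    """Share of admissions, guarding against an empty period."""
    return numerator / denominator if denominator else 0.0


def coding_treatment_gap(before: PeriodCounts, after: PeriodCounts) -> dict:
    """Compare how much the diagnosis rate moved with how much the treatment rate moved."""
    dx_change = rate(after.anemia_coded, after.admissions) - rate(
        before.anemia_coded, before.admissions
    )
    tx_change = rate(after.transfused, after.admissions) - rate(
        before.transfused, before.admissions
    )
    return {
        "diagnosis_rate_change": dx_change,
        "transfusion_rate_change": tx_change,
        "gap": dx_change - tx_change,
    }


# Illustrative numbers only; they are not taken from the BCBS report.
before = PeriodCounts(admissions=1000, anemia_coded=50, transfused=20)
after = PeriodCounts(admissions=1000, anemia_coded=120, transfused=22)
result = coding_treatment_gap(before, after)
print(result)
```

A large positive `gap` means diagnoses rose much faster than the treatment that should accompany them, which is the signal the analysis describes. A real study would also need to control for changes in patient mix and coding guidelines.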

The study, published by BCBS in March 2026, found that AI-driven upcoding of this one diagnosis added $22 million to maternity admission costs in a single year. Extrapolated across all complex conditions, BCBS estimated the broader AI upcoding phenomenon may be behind $663 million in excess inpatient spending.

“Something is disconnected,” said Dr. Razia Hashmi, VP of Clinical Affairs at BCBSA. “Among hospitals showing the fastest rise in diagnoses of post-partum anemia, the rise in patients coded with this condition wasn’t paired with the level of care we would have expected.”

This isn’t an edge case. STAT News reported on the study and noted that health insurers have been raising the AI upcoding alarm since last summer, but until this BCBS analysis, no one had offered public evidence. Now we have it.

The AI documentation systems involved — ambient listening tools that automatically translate clinical conversations into billing codes — were never designed to inflate bills. But optimizing for code completeness without clinical validation of each generated code creates exactly this outcome. The AI sees a symptom mentioned in passing, adds a code, and no one reviews it.
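One way to picture the missing review step is a gate that holds back any suggested code lacking corroborating evidence in the chart. The sketch below is hypothetical throughout: `SuggestedCode`, `triage`, the placeholder code labels, and the evidence strings are assumptions for illustration and do not describe any vendor's product.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class SuggestedCode:
    """A billing code proposed by a documentation tool (hypothetical shape)."""
    code: str  # placeholder label, not a real billing code
    description: str
    # Chart items that corroborate the code, e.g. an order or a lab result.
    supporting_evidence: List[str] = field(default_factory=list)


def triage(
    codes: List[SuggestedCode],
) -> Tuple[List[SuggestedCode], List[SuggestedCode]]:
    """Split suggestions into corroborated codes and codes needing human review."""
    accepted: List[SuggestedCode] = []
    needs_review: List[SuggestedCode] = []
    for suggestion in codes:
        if suggestion.supporting_evidence:
            accepted.append(suggestion)
        else:
            # Mentioned in conversation only: hold for a clinician or coder.
            needs_review.append(suggestion)
    return accepted, needs_review


suggestions = [
    SuggestedCode("DX-DELIVERY", "Routine delivery", ["delivery note"]),
    SuggestedCode("DX-ANEMIA", "Acute posthemorrhagic anemia"),  # no evidence attached
]
accepted, needs_review = triage(suggestions)
print([s.code for s in accepted])
print([s.code for s in needs_review])
```

The design choice is that the default for an uncorroborated code is review, not submission. What counts as sufficient evidence is a clinical question that this sketch deliberately leaves open.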

A Genuinely Different Bet: World Models for Safe Clinical AI

Not everyone is racing to market with LLM-based agents. One of the most interesting developments this week came from outside the conference floor.

Nabla, an AI documentation company whose ambient AI assistant is deployed across hundreds of U.S. health systems, announced an exclusive strategic partnership with Advanced Machine Intelligence (AMI) — the new company from former Meta chief AI scientist Yann LeCun, which just raised $1.03 billion. As STAT News reported and Nabla’s team detailed, the bet is on “world models” — AI systems that learn abstract representations of how complex environments function, rather than probabilistic text prediction.

The clinical argument is compelling. Standard LLMs produce outputs that are statistically likely, not necessarily accurate. Healthcare demands determinism: when a physician reviews an AI-drafted note, they need to trust it reflects reality, not the most plausible sequence of medical words. World models, Nabla’s team argues, offer a path to “safe, deterministic, auditable decision-making” — the kind of traceable reasoning that regulators and clinicians can actually verify.

This is the right framing for the problem. I don’t know yet whether world models will deliver on that promise in clinical settings. But I am glad someone in this space is asking the right question: not “how do we deploy fastest?” but “how do we build something auditable?”

The Language Access Dividend

One HIMSS story that received less attention than the agent announcements also deserves mention. Healthcare IT News covered a session where Nuvance-Northwell Health described how Oracle’s AI translation tools are helping clinicians deliver discharge instructions in patients’ native languages — a seemingly simple capability with enormous patient safety implications.

“Every single language in the world is spoken by someone in this region,” said Dr. Albert Villarin, the health system’s CMO. Patients who don’t fully understand their discharge instructions have higher readmission rates for conditions like heart failure. Getting a correct translation automatically, from a tool trained on medical terminology, medication codes, and FHIR data standards, is not glamorous AI. But it is genuinely useful AI with a measurable safety outcome.

This is what I want to see more of: AI that solves a known, specific clinical problem, with outcomes that can be measured before and after deployment.

The Framework We Need Now

I’ve spent fifteen years watching AI enter clinical medicine. What concerns me most in this HIMSS moment is not that the technology is bad — much of it is genuinely impressive. What concerns me is the absence of a shared validation standard.

When a diagnostic algorithm goes to the FDA for clearance, it must demonstrate clinical performance on a representative population, disclose limitations, and be monitored post-deployment. When an AI agent that influences clinical documentation, billing, and patient communication deploys via a software contract, none of those requirements apply.

The BCBS upcoding study shows what happens in that gap. Patients get diagnoses added to their records that don’t reflect their care. Insurers pay for conditions that weren’t treated. Premiums rise. And the patients most likely to have their records silently changed are the ones least equipped to push back.

We are at a moment when decisions made in the next 12 to 18 months will determine whether AI becomes a genuine equalizer in clinical care, or a new vector for entrenching the disparities that already define our health system. I am watching HIMSS 2026 with hope, and with a great deal of attention.

References

- Casey Ross, STAT News (March 11, 2026). “AI agents are rapidly spreading in health care, but validation is lacking.” https://www.statnews.com/2026/03/11/ai-agents-himss-google-microsoft-epic-oracle/ (Accessed March 12, 2026)

- Jessica Hagen, Healthcare IT News / MobiHealthNews (March 11, 2026). “Q&A: Amazon One Medical chief medical officer on Health AI release.” https://www.healthcareitnews.com/news/qa-amazon-one-medical-chief-medical-officer-health-ai-release (Accessed March 12, 2026)

- Blue Cross Blue Shield Association / Blue Health Intelligence (March 2026). “AI Boosting Hospital Billing.” https://www.bcbs.com/news-and-insights/report/ai-boosting-hospital-billing (Accessed March 12, 2026)

- Brittany Trang, STAT News (March 9, 2026). “Payer data suggests AI is driving up healthcare costs.” https://www.statnews.com/2026/03/09/bcbs-study-hospitals-use-ai-upcoding-drive-up-prices/ (Accessed March 12, 2026)

- Casey Ross, STAT News (March 10, 2026). “Health AI startup to benefit from $1 billion funding round for Yann LeCun’s AMI.” https://www.statnews.com/2026/03/10/ai-ami-yann-lecun-nabla-lebrun-world-model/ (Accessed March 12, 2026)

- Nabla Team (March 10, 2026). “AMI raises $1.03B to build world models — powering the next generation of healthcare AI with Nabla.” https://www.nabla.com/blog/ami-raises-1-03b-to-build-world-models----powering-the-next-generation-of-healthcare-ai-with-nabla (Accessed March 12, 2026)

- Nathan Eddy, Healthcare IT News (March 11, 2026). “Health systems use AI to break down language barriers in patient discharge notes.” https://www.healthcareitnews.com/news/health-systems-use-ai-break-down-language-barriers-patient-discharge-notes (Accessed March 12, 2026)

AI-Generated Content Notice

This article was created using artificial intelligence technology. While we strive for accuracy and provide valuable insights, readers should independently verify information and use their own judgment when making business decisions. The content may not reflect real-time market conditions or personal circumstances.
